From Pilots to Performance: Why Innovation Needs an Operating Model

Updated March 2026

Most organizations don't lack innovation activity.

They lack an innovation operating model.

Ideas are generated. Technologies are explored. Pilots are launched. Progress is visible in quarterly updates and portfolio reviews. And yet outcomes remain inconsistent. Some initiatives scale. Many stall. Most never reach a clear conclusion — they simply lose momentum until nobody is sure whether they are still active or quietly abandoned.

This is not a failure of effort or intent. It is the predictable result of treating innovation as a collection of projects rather than a managed system. It is also among the most expensive problems in enterprise innovation, because it surfaces after significant investment has already been made.

The Definition

An innovation operating model is the set of shared rules, decision structures, governance mechanisms, and institutional processes that determine how innovation work moves through an organization (from the first market signal through idea capture, evaluation, piloting, and scale) consistently, repeatably, and with measurable results.

It is not a process document. It is not a stage-gate diagram on a slide. It is the living infrastructure that determines whether innovation compounds over time or resets with every new initiative, team change, or leadership priority shift.

Without an operating model, innovation depends on individual heroics. With one, innovation becomes a discipline the organization can practice at scale.

Why Innovation Without an Operating Model Doesn't Scale

In the early stages of an innovation program, structure is rarely needed. A small number of initiatives. Strong individual judgment. High executive attention. Enthusiasm that compensates for the absence of process.

But as activity grows, those conditions disappear.

Portfolios expand across multiple business units. Stakeholders multiply. Timelines compress. The same questions get re-litigated at every gate because nobody captured the rationale from the last discussion. Promising vendors get evaluated again because nobody recorded the prior evaluation. Pilots launch without defined success criteria and end without clear decisions because nobody built the governance that forces a conclusion.

What once felt entrepreneurial begins to feel chaotic. And the response — more tools, more process, more governance layers — typically makes things worse rather than better, because it adds overhead without adding clarity.

The organization does not have a process problem. It has an operating model problem.

Innovation Operating Model vs. Innovation Process: What's the Difference?

This distinction matters because most organizations respond to the operating model problem by improving their process — and find that better documentation, more detailed templates, and additional review stages do not produce better outcomes.

A process describes the activities teams perform and the sequence in which they perform them. It tells people what to do and in what order.

An operating model governs how decisions get made — who makes them, on what evidence, at what point, with what accountability for the outcome. It tells people what to decide, why it matters, and what happens next based on the decision.

The difference shows up most clearly at the transition points — the handoffs between stages where context is lost, accountability becomes unclear, and initiatives stall not because of a technology failure but because nobody was responsible for what happened next.

Process describes activity. Operating model governs decisions. Innovation programs that run on process alone produce activity. Innovation programs that run on an operating model produce outcomes.

What an Innovation Operating Model Actually Includes

A functional innovation operating model aligns five things. Most programs have one or two of these. The programs that consistently scale innovation have all five.

1. A defined entry point

How does an initiative enter the innovation system? What triggers a technology scouting engagement? What qualifies an idea for evaluation? What level of business sponsorship is required before resources are committed?

Without a defined entry point, everything enters the system — and the system becomes a backlog rather than a pipeline. The entry point is not a filter for quality. It is a mechanism for routing: connecting each initiative to the right stage of the framework given what is already known about it.

2. Consistent evaluation criteria

How does the organization assess whether an initiative is ready to advance? Is the same framework applied across all initiatives in a category, or does each evaluation reflect the preferences of the evaluator who happened to run it?

Inconsistent evaluation is one of the most common sources of governance failure in enterprise innovation. When similar initiatives receive different assessments based on who evaluated them rather than what the evidence showed, the downstream decisions (which pilots to fund, which vendors to advance, which programs to scale) cannot be defended. And programs that cannot be defended lose executive support.
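One concrete way to take evaluator preference out of the score is to fix the criteria and weights once per category and reject any assessment that deviates from them. The sketch below assumes a weighted 1-5 rubric; the category name, criteria, and weights are hypothetical placeholders, not a recommended rubric.

```python
from typing import Dict

# Hypothetical rubric: one fixed set of criteria and weights per initiative category.
RUBRICS: Dict[str, Dict[str, float]] = {
    "supply-chain-ai": {
        "business_impact": 0.4,
        "technical_readiness": 0.3,
        "integration_effort": 0.2,
        "vendor_viability": 0.1,
    },
}


def score(category: str, ratings: Dict[str, int]) -> float:
    """Weighted score on a 1-5 scale using the category's shared rubric.

    Raises ValueError if the evaluator skipped a criterion, added one the
    rubric does not define, or used a rating outside 1-5, so every
    assessment in the category is scored the same way.
    """
    rubric = RUBRICS[category]
    if set(ratings) != set(rubric):
        missing = sorted(set(rubric) - set(ratings))
        extra = sorted(set(ratings) - set(rubric))
        raise ValueError(f"missing criteria {missing}, unknown criteria {extra}")
    for name, value in ratings.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} rating {value} is outside 1-5")
    return round(sum(rubric[name] * ratings[name] for name in rubric), 2)
```

Because the rubric lives in one shared place rather than in each evaluator's head, two evaluators rating the same evidence the same way get the same score, and any disagreement shows up in the individual ratings where it can be discussed.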

3. Explicit decision gates with defined outcomes

At what points does the organization make a commitment decision — advance, redirect, pause, or stop? Who owns that decision? What evidence is required to support each outcome?

Decision gates are the governance mechanism that prevents pilot purgatory — the state in which initiatives are neither officially succeeding nor officially failing, consuming resources and management attention without producing a conclusion. A properly designed decision gate makes indefinite extension structurally impossible. The gate requires a decision, and the decision produces a defined next step.
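To make "structurally impossible" concrete, here is a minimal sketch, assuming a gate is modeled as a record that only accepts the four outcomes named above (advance, redirect, pause, stop) and requires a named owner and supporting evidence. The class and function names, the next-step wording, and the rule that a pause must carry a re-review date are illustrative assumptions, not a description of any particular product.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List, Optional, Tuple


class GateOutcome(Enum):
    """The only outcomes a gate may produce; there is deliberately no 'defer'."""
    ADVANCE = "advance"
    REDIRECT = "redirect"
    PAUSE = "pause"
    STOP = "stop"


# Hypothetical mapping from each outcome to the defined next step it triggers.
NEXT_STEP = {
    GateOutcome.ADVANCE: "commit resources to the next stage",
    GateOutcome.REDIRECT: "revise scope and return to evaluation",
    GateOutcome.PAUSE: "hold until the dated re-review",
    GateOutcome.STOP: "close the initiative and record the rationale",
}


@dataclass(frozen=True)
class GateDecision:
    initiative: str
    owner: str                         # the named person accountable for this decision
    outcome: GateOutcome
    evidence: Tuple[str, ...]          # evidence cited in support of the outcome
    review_by: Optional[date] = None   # required for PAUSE so a pause cannot drift

    def __post_init__(self) -> None:
        if not self.owner:
            raise ValueError("a gate decision needs a named owner")
        if not self.evidence:
            raise ValueError("a gate decision needs supporting evidence")
        if self.outcome is GateOutcome.PAUSE and self.review_by is None:
            raise ValueError("a pause needs a dated re-review")


def close_gate(initiative: str, owner: str, outcome: str,
               evidence: List[str], review_by: Optional[date] = None) -> str:
    """Validate a gate decision and return its defined next step.

    GateOutcome(outcome) raises ValueError for anything other than the four
    allowed values (e.g. "revisit next quarter"), so the gate cannot close
    without one of them.
    """
    decision = GateDecision(initiative, owner, GateOutcome(outcome),
                            tuple(evidence), review_by)
    return NEXT_STEP[decision.outcome]
```

For example, `close_gate("pilot-a", "J. Smith", "pause", ["vendor delay"], review_by=date(2026, 6, 30))` returns the pause step, while the same call with `"revisit next quarter"` as the outcome raises `ValueError`. Encoding the allowed outcomes this way means a review that ends with "we need more data" simply cannot be recorded as a closed gate.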

4. Institutional memory that persists across team changes

When a team member leaves, what happens to the evaluation rationale they accumulated? When a vendor reappears two years later, does the organization know what was already found? When a new pilot begins in a technology category that was evaluated before, does the team starting fresh have access to what the prior team learned?

In most organizations the answer to all three is no. Institutional memory lives in people's heads, email archives, and shared drives that nobody maintains. The operating model is only as good as the last person who ran the program — which means it degrades continuously as teams change.

An innovation operating model that captures institutional memory by design — as a workflow output rather than a documentation task — builds organizational intelligence that compounds rather than dissipates.
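One way to picture memory captured "as a workflow output" is a shared registry that each evaluation writes to when it closes and that any team can query before starting a new one. The sketch below is hypothetical: the field names and the simple name-normalization rule are assumptions, and a real system would need fuzzier entity matching than stripping punctuation and corporate suffixes.

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass(frozen=True)
class Evaluation:
    vendor: str
    team: str
    evaluated_on: date
    conclusion: str
    rationale: str


def normalize(vendor: str) -> str:
    """Collapse case, punctuation, and common suffixes so 'Acme, Inc.' matches 'ACME'."""
    name = re.sub(r"[^a-z0-9 ]", "", vendor.lower())
    name = re.sub(r"\b(inc|llc|ltd|corp|co)\b", "", name)
    return " ".join(name.split())


class EvaluationRegistry:
    """Hypothetical shared record, meant to be written to whenever an evaluation closes."""

    def __init__(self) -> None:
        self._by_vendor: Dict[str, List[Evaluation]] = {}

    def record(self, evaluation: Evaluation) -> None:
        self._by_vendor.setdefault(normalize(evaluation.vendor), []).append(evaluation)

    def prior(self, vendor: str) -> List[Evaluation]:
        """Return earlier evaluations of this vendor across all teams, newest first."""
        found = self._by_vendor.get(normalize(vendor), [])
        return sorted(found, key=lambda e: e.evaluated_on, reverse=True)
```

The design point is that `record` sits inside the workflow rather than being a separate documentation task: if closing an evaluation always calls it, a new team checking `prior("Acme")` sees what earlier teams concluded and why, even after the people who ran those evaluations have left.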

5. Portfolio visibility and outcome reporting

At any given moment, can leadership see the full portfolio of active innovation initiatives, their current stage, their milestone status, and their trajectory? When a pilot closes, does the outcome feed back into the institutional record in a form that informs future decisions?

Portfolio visibility is not a reporting feature. It is the mechanism by which an organization learns from its own innovation history. Without it, every initiative is evaluated in isolation — which means the organization never gets smarter from the programs it has already run.

Signs Your Innovation Program Needs an Operating Model

The following patterns are diagnostic. Each one can signal a structural problem that more activity will not solve, and several together are a strong indication.

Pilots that never formally end. Initiatives that are neither officially active nor officially closed — they have simply gone quiet. Nobody has declared them finished because nobody owns the decision to stop.

Repeated evaluations of the same vendors. Different teams in different business units evaluate the same company without any awareness of the duplication. The organization pays twice for the same information and may reach two different conclusions.

Gate reviews that produce recommendations, not decisions. Reviews that end with "we need more data" or "let's revisit next quarter" rather than a committed direction. The gate has become a meeting, not a mechanism.

Institutional knowledge that walks out the door. When a program manager leaves, the program effectively restarts. The new person cannot access the evaluation rationale, vendor history, or decision context from prior cycles.

Leadership asking what the program has produced. The innovation team cannot answer with evidence — only with activity metrics that do not connect to business outcomes.

Inconsistent evaluation outcomes. Similar initiatives receiving different assessments based on who evaluated them, which business unit sponsored them, or which quarter the evaluation happened to fall in.

If three or more of these patterns are present, the program has an operating model problem. Fixing individual process steps will not resolve it.

How an Innovation Operating Model Connects to a Framework

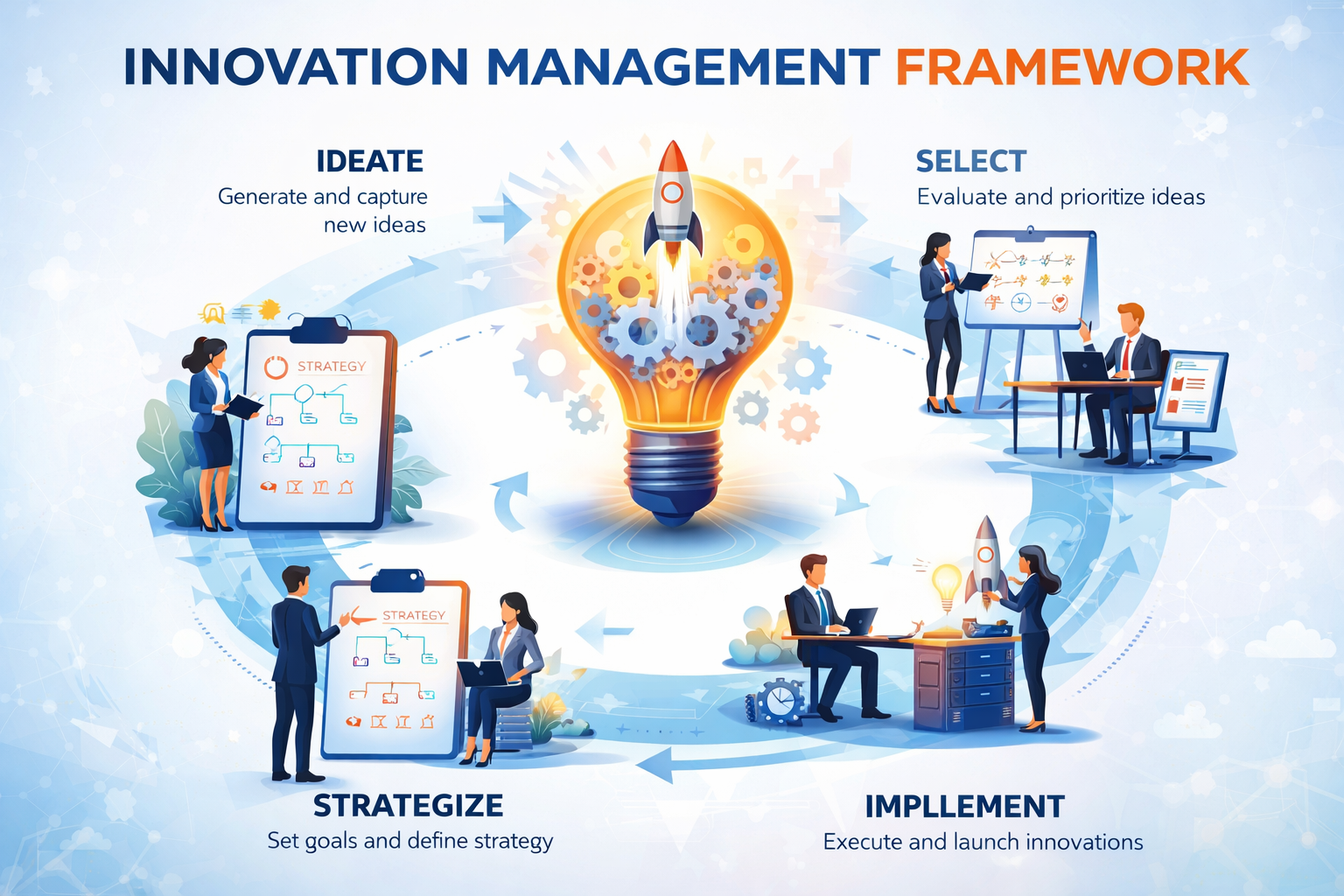

An operating model needs a framework to operate inside. The framework defines the stages. The operating model governs the decisions between them.

The Traction Innovation Framework organizes innovation into eight connected stages — from market intelligence and technology scouting through idea capture, evaluation, pilot management, and scale. Each stage answers a specific question. Each decision gate connects the answer to the next commitment.

The operating model that runs inside this framework provides:

- A consistent entry point that routes each initiative to the right stage

- Evaluation criteria that apply the same framework across all assessments in a category

- Decision gates that produce explicit outcomes rather than deferred recommendations

- Institutional memory captured as a platform capability rather than an individual responsibility

- Portfolio visibility that connects every active initiative to a leadership-ready view in real time

Together, these elements transform innovation from a collection of experiments into a managed discipline that produces compounding returns.

👉 Try Traction AI free — technology scouting and trend reports, no demo call required

The Shift from Innovation Activity to Innovation Performance

The organizations that consistently scale innovation make one clear shift — not in the tools they use or the methodologies they adopt, but in how they think about what they are building.

They move from managing innovation projects to operating an innovation system.

From running more pilots to designing the governance that makes pilots produce decisions.

From tracking activity to measuring outcomes.

From hoping individual judgment will produce consistent results to building the structure that makes consistency independent of who is in the room.

This is the difference between innovation as experimentation and innovation as a performance discipline. The operating model is what makes that shift real — not aspirational.

Frequently Asked Questions

What is an innovation operating model?

An innovation operating model is the set of shared rules, decision structures, governance mechanisms, and institutional processes that govern how innovation work moves through an organization — from market intelligence through idea capture, evaluation, piloting, and scale. It is the infrastructure that determines whether innovation compounds over time or resets with every new initiative or team change.

What is the difference between an innovation process and an innovation operating model?

A process describes the activities teams perform and the sequence in which they perform them. An operating model governs how decisions get made — who makes them, on what evidence, at what point, and with what accountability for the outcome. Process describes activity. Operating model governs decisions. Programs that run on process alone produce activity. Programs that run on an operating model produce outcomes.

Why do innovation programs stall without an operating model?

Without an operating model, innovation depends on individual judgment and heroics that do not scale. As portfolios grow, teams change, and executive attention shifts, the informal structures that kept early programs coherent break down. The result is pilot purgatory, repeated evaluations, inconsistent gate decisions, institutional knowledge loss, and leadership reporting that cannot connect activity to outcomes.

What does an innovation operating model include?

A functional innovation operating model includes five elements: a defined entry point that routes initiatives to the right stage, consistent evaluation criteria applied across all assessments in a category, explicit decision gates with defined possible outcomes, institutional memory that persists across team changes as a platform capability rather than an individual responsibility, and portfolio visibility that connects every active initiative to a leadership-ready view.

How does an innovation operating model connect to a framework?

An operating model needs a framework to operate inside. The framework defines the stages — the sequence of decisions that take an initiative from market signal to scaled outcome. The operating model governs how decisions are made between those stages — the criteria, the governance, the accountability, and the institutional memory that make each decision better than the last.

How does AI support an innovation operating model?

AI supports an innovation operating model by extending the reach of the human judgment the model is built around, not by replacing it. At the market intelligence stage, AI can monitor more technology categories than a manual process could realistically cover. At the evaluation stage, AI generates structured vendor profiles and trend reports that reduce research overhead. For institutional memory, AI surfaces prior evaluations and pattern matches that inform current decisions without requiring someone to remember or find them manually.

What are the signs that an innovation program needs an operating model?

The most common signals are: pilots that never formally end, repeated evaluations of the same vendors by different teams, gate reviews that produce recommendations rather than decisions, institutional knowledge that leaves when team members leave, leadership asking what the program has produced without a clear answer, and inconsistent evaluation outcomes for similar initiatives.

Related Reading

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- How to Design Innovation Decision Gates That Actually Work

- Decision Gates vs. Innovation Theater: How High-Performing Teams Turn Pilots Into Decisions

- Why Judgment Alone Doesn't Scale: The Case for Consistent Innovation Evaluation

- Why Pilot Management Software Is the Missing Link in Innovation Execution

- What Is Innovation Management? A Practical Definition for Enterprise Teams

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management and pilot management — with AI-generated Trend Reports, AI Company Snapshots, automatic deduplication, and decision coaching built in.

Traction AI enables unlimited vendor discovery through conversational AI scouting: no Boolean searches, no manual filtering, no analyst hours. Traction offers 50,000 curated Traction Matches plus full Crunchbase integration at no extra cost, with zero setup fees, zero data migration charges, full API integrations, and deep configurability for each customer's unique workflows. Traction's innovation management platform gives enterprise innovation teams the intelligence and execution capability to turn innovation into measurable business outcomes. Recognized by Gartner. SOC 2 Type II certified.

tractiontechnology.com
