Readiness Is Not Binary: Why "Too Early" Is the Wrong Answer in Innovation

Updated March 2026

Innovation teams hear it constantly.

Sometimes from leadership. Sometimes from operators. Sometimes from themselves.

"It's interesting — but it's too early."

The phrase sounds thoughtful. It suggests discipline, caution, and good governance. In practice, "too early" is rarely a complete answer. More often it is a convenient one: a way to postpone a decision while still looking rigorous.

In 2026, relying on that phrase is one of the fastest ways innovation programs lose momentum — not because teams are too conservative, but because they stop asking the questions that actually matter.

The Definition

Readiness in innovation is not a binary state of ready or not ready. It is a multi-dimensional, contextual assessment of what an initiative is prepared to do, in which business context, at which level of commitment, and with which remaining uncertainties explicitly managed.

The shift from binary readiness thinking to multi-dimensional readiness assessment is one of the most consequential changes a high-performing innovation program makes. It is the difference between "too early" as a conclusion and "not yet ready for X, but ready for Y" as an actionable direction.

Why "Too Early" Feels Responsible — and Why It Isn't

Calling something "too early" allows organizations to defer a decision without explicitly saying so. It creates the appearance of rigor without requiring clarity about what would need to change for the assessment to be different.

What usually sits underneath that judgment is not a lack of belief in the technology. It is uncertainty about everything around it — ownership, integration, governance, economics, and organizational impact. Rather than surface those uncertainties and address them directly, teams collapse them into a single conclusion that closes the conversation rather than advancing it.

The result is that promising initiatives stall not because they were genuinely unready but because nobody was willing to specify what unready meant in operational terms. The uncertainty was preserved instead of resolved. And preserved uncertainty does not eventually resolve itself — it accumulates.

The Danger of Binary Thinking in Innovation

Most organizations still treat readiness as a yes-or-no decision. Either a solution is enterprise-ready or it is not. Either it advances or it stops.

That binary thinking may work for procurement decisions on established products. It breaks down quickly in innovation contexts where technologies mature unevenly across dimensions and rarely become ready across all of them simultaneously.

When teams are forced into blunt outcomes, three predictable behaviors emerge.

Promising initiatives get pushed forward prematurely to avoid the appearance of stagnation, advancing without the readiness that would make the advancement sustainable.

Early-stage initiatives get quietly abandoned because they do not meet standards that were never designed for early-stage evaluation. Potential value is lost because the wrong criteria were applied at the wrong time.

Others linger indefinitely in pilot mode, neither advancing nor formally stopping, consuming resources without producing decisions.

None of these outcomes represents disciplined innovation. They represent the organizational cost of not having a shared readiness language.

Readiness Is Contextual — Not Chronological

The biggest misconception behind "too early" thinking is the assumption that readiness is primarily about timing — that a technology simply needs more time to mature before it becomes viable.

In reality, readiness is far more contextual than chronological.

A solution might be appropriate for one business unit but genuinely risky in another. It might be viable in a constrained pilot environment but operationally complex at enterprise scale. It might be worth pursuing to answer a specific strategic question even when full deployment is clearly premature.

When teams treat readiness as a universal moment in time — a threshold that a technology either has or has not crossed — they lose the ability to make intentional choices about learning, risk, and investment. The question "is this ready?" implies a single answer that applies equally to all contexts. The question "what is this ready for?" opens the conversation that actually produces useful direction.

How Readiness Actually Evolves

Readiness does not arrive all at once. It accumulates across dimensions and progresses through recognizable states that support different types of decisions.

Ready to explore. The technology is interesting and the problem is real, but fundamental questions about viability remain unanswered. The appropriate action is structured exploration — a defined learning activity designed to answer the most important open questions before pilot resources are committed.

Ready to pilot. The technology has demonstrated viability in a controlled context and the key unknowns are operational and organizational rather than technical. The appropriate action is a structured pilot designed to answer a specific question about enterprise readiness.

Ready to scale. The technology has performed against defined KPIs in the pilot, operational ownership is clear, security and governance have been addressed, and the business case for continued investment is documented. The appropriate action is a scale commitment with defined integration, operating, and change management plans.

Each state answers a different question. Each requires different evidence. Each supports a different type of decision.

When organizations fail to distinguish between these states, misalignment becomes inevitable. Stakeholders argue about readiness when they are actually arguing about which decision the initiative is meant to support. Leadership asks for more evidence when the existing evidence is already sufficient for the next decision — just not for the final one. Teams defer action when what is actually needed is clarity about what the next action should be.
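The three states can be sketched as a small lookup from evidence to "what is this ready for." This is an illustrative sketch only, not part of any framework or product described here: the evidence flag names are hypothetical labels paraphrased from the state descriptions above, and a real program would define its own criteria.

```python
from enum import IntEnum


class ReadinessState(IntEnum):
    NOT_READY = 0
    EXPLORE = 1
    PILOT = 2
    SCALE = 3


# Evidence each state requires. The flag names are hypothetical,
# paraphrased from the three state descriptions in this post.
REQUIRED_EVIDENCE = {
    ReadinessState.EXPLORE: {"problem_is_real"},
    ReadinessState.PILOT: {"viability_demonstrated"},
    ReadinessState.SCALE: {
        "pilot_kpis_met",
        "operational_owner_named",
        "security_governance_addressed",
        "business_case_documented",
    },
}


def ready_for(evidence: set[str]) -> tuple[ReadinessState, set[str]]:
    """Return the highest state the evidence supports, plus the
    evidence still missing for the next state (empty at SCALE)."""
    current = ReadinessState.NOT_READY
    for state in (ReadinessState.EXPLORE, ReadinessState.PILOT, ReadinessState.SCALE):
        missing = REQUIRED_EVIDENCE[state] - evidence
        if missing:
            return current, missing
        current = state
    return current, set()


state, gaps = ready_for({"problem_is_real", "viability_demonstrated", "pilot_kpis_met"})
print(state.name, sorted(gaps))
```

The return value pairs a state with the gaps blocking the next one, which mirrors the "ready for X, not yet for Y" framing rather than a single yes-or-no result.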

The Better Question Innovation Teams Should Ask

Instead of asking: "Is this ready?"

High-performing innovation teams ask: "What is this ready for — and what would need to change for it to be ready for the next decision?"

This reframe does three things simultaneously. It produces actionable direction rather than a closed judgment. It specifies what remaining work is needed rather than leaving uncertainty unaddressed. And it makes the decision-making process visible to all stakeholders — so leadership understands why an initiative is being monitored rather than advanced, and the team understands what they are working toward.

The "too early" answer ends conversations. The "ready for X, not yet for Y" answer advances them.

Why Inconsistent Readiness Decisions Undermine Credibility

At a portfolio level, inconsistent readiness decisions are particularly damaging — and they are almost always the product of binary thinking applied inconsistently by different reviewers.

When some initiatives advance based on informal judgment while others stall without clear rationale, innovation begins to look arbitrary. Leaders struggle to understand why resources are allocated the way they are. Teams cannot explain outcomes to the people who submitted the initiatives that did not advance. Over time, confidence in the innovation function erodes — quietly at first, then visibly, until leadership starts asking whether the program is producing value proportional to its cost.

The issue is not risk appetite. Most innovation programs have sufficient organizational tolerance for uncertainty. The issue is the absence of a shared language for readiness — one that makes decisions explainable, comparable, and consistent regardless of who is reviewing which initiative in which business unit.

Consistent readiness assessment is what makes that shared language operational. Not a policy or a principle — a structured framework applied the same way across all initiatives in a category, producing outputs that are comparable and decisions that are defensible.

Building Readiness Assessment Into the Innovation Process

Readiness assessment is most effective when it is built into the workflow rather than applied ad hoc at gate reviews. This means defining the dimensions of readiness — business, technical, operational, security, economic — at the program level, specifying what evidence is required at each stage, and applying the assessment consistently before pilot commitment is requested.

When readiness is assessed at the right stage and captured as structured data, it does three things that informal judgment cannot. It makes the current state of each initiative explicit — which dimensions are strong, which have gaps, what would need to change. It produces comparable assessments across initiatives — so portfolio allocation decisions are based on evidence rather than advocacy. And it builds institutional memory — so the patterns that predict readiness in a category become visible over time and inform future assessments.

This is how the assessment compounds. The organization does not start from zero each time. It starts from what it already learned.
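As a rough illustration of what "captured as structured data" could look like, the sketch below records gaps per dimension for each initiative and counts which dimensions block most often across a portfolio. The five dimension names come from this post; the class, function, initiative names, and gap notes are all hypothetical and stand in for whatever schema a program actually uses.

```python
from collections import Counter
from dataclasses import dataclass, field

# The five readiness dimensions named in this post.
DIMENSIONS = ("business", "technical", "operational", "security", "economic")


@dataclass
class ReadinessProfile:
    """Hypothetical record: open gaps per dimension for one initiative."""
    initiative: str
    gaps: dict[str, list[str]] = field(default_factory=dict)

    def __post_init__(self):
        unknown = set(self.gaps) - set(DIMENSIONS)
        if unknown:
            raise ValueError(f"unknown dimensions: {sorted(unknown)}")

    def strong_dimensions(self) -> list[str]:
        # Dimensions with no recorded gaps.
        return [d for d in DIMENSIONS if not self.gaps.get(d)]

    def blocking_dimensions(self) -> list[str]:
        # Dimensions with at least one recorded gap.
        return [d for d in DIMENSIONS if self.gaps.get(d)]


def blockers_across_portfolio(profiles) -> Counter:
    """Count how often each dimension has open gaps across initiatives."""
    counts = Counter()
    for profile in profiles:
        counts.update(profile.blocking_dimensions())
    return counts


# Made-up example data, for illustration only.
portfolio = [
    ReadinessProfile("initiative-a", {"security": ["data handling review pending"]}),
    ReadinessProfile("initiative-b", {"security": ["no compliance sign-off"],
                                      "economic": ["unit economics unproven"]}),
    ReadinessProfile("initiative-c"),
]
print(blockers_across_portfolio(portfolio).most_common())
```

In this made-up portfolio, security shows up as the most frequent blocker, which is the kind of pattern that only becomes visible once assessments share one structure.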

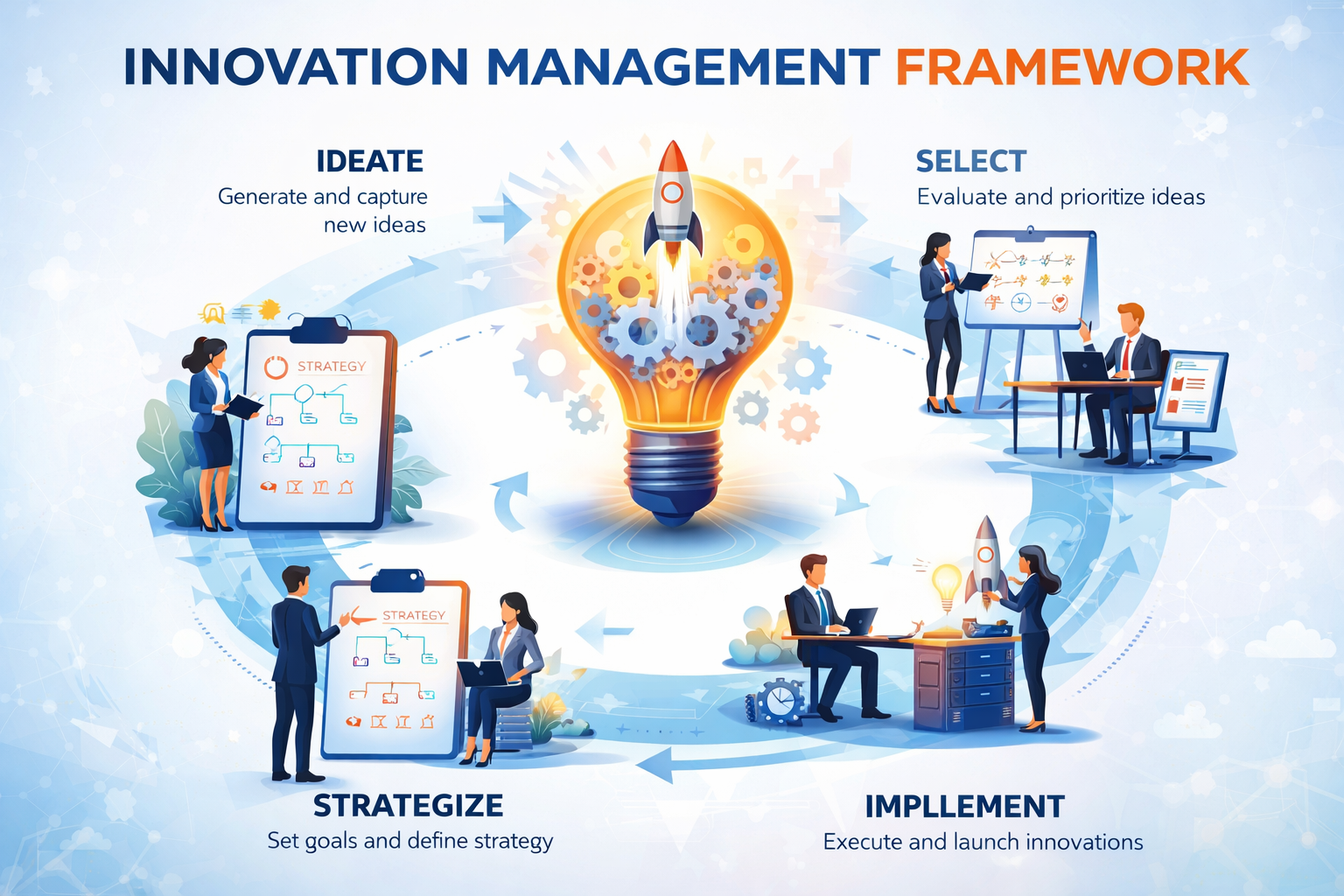

How This Connects to the Innovation Framework

The binary readiness problem is one of the structural issues the Traction Innovation Framework is specifically designed to address. The framework defines readiness across five dimensions — business, technical, operational, security, and economic — and assesses them at the appropriate stage before commitment is requested.

This gives gate reviews a structured baseline rather than a blank slate. Decisions are made against defined criteria applied consistently. "Too early" becomes "not yet ready on these specific dimensions, with these specific gaps, addressable through these specific actions" — which is a direction, not a dead end.

Frequently Asked Questions

Why is "too early" the wrong answer in innovation?

"Too early" is a binary judgment that closes a conversation rather than advancing it. It defers a decision without specifying what would need to change for the assessment to be different. In most cases what sits underneath the judgment is not a fundamental lack of viability but unresolved uncertainty about ownership, integration, governance, or economics. Making that uncertainty explicit — and specifying what would need to be resolved — produces actionable direction. "Too early" does not.

What does it mean that readiness is not binary?

Saying that readiness is not binary means that a technology is not simply ready or not ready. It is ready for specific types of decisions, in specific contexts, with specific levels of remaining uncertainty. A solution can be ready to explore but not to pilot, ready to pilot but not to scale, ready in one business unit but not another. Binary readiness thinking collapses this nuance into a single judgment that is rarely accurate and rarely useful.

How should innovation teams assess readiness?

Readiness should be assessed across five dimensions: business readiness (problem ownership and materiality), technical readiness (integration and scalability), operational readiness (process change and adoption), security and governance readiness (compliance and data handling), and economic readiness (unit economics and business case). Each dimension is assessed against defined criteria at the appropriate stage — before pilot commitment is made, not after problems surface.

What are the three states of innovation readiness?

The three states are: ready to explore (viability is unproven, structured learning is the appropriate action), ready to pilot (viability is demonstrated, operational and organizational questions need answering), and ready to scale (the pilot has answered its questions, operational ownership and the business case are clear). Each state supports a different decision. Misidentifying which state an initiative is in, or collapsing all three into a single yes-or-no question, is the most common source of readiness-related misalignment.

How does readiness assessment improve decision gate quality?

Readiness assessment gives decision gates structured evidence to work with rather than presentations to react to. A gate review that starts from a readiness profile — which dimensions are strong, which have gaps, what would need to change — focuses the conversation on evidence and direction rather than advocacy and negotiation. It makes the outcome of the gate predictable based on the evidence rather than dependent on who makes the most compelling case in the room.

How does consistent readiness assessment build portfolio intelligence?

When readiness assessments are captured as structured data across the portfolio, the patterns that predict success and failure in a category become visible over time: which dimensions consistently block scale, which business units have the highest readiness at pilot entry, and which vendor types routinely have late-surfacing governance gaps. This portfolio intelligence compounds. Each new assessment becomes faster and more accurate because it starts from what the organization already learned.

Related Reading

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- The Technology Readiness Gap: Why Most Innovation Pilots Fail Before They Reach Production

- How to Design Innovation Decision Gates That Actually Work

- Decision Gates vs. Innovation Theater: How High-Performing Teams Turn Pilots Into Decisions

- Why Pilot Management Software Is the Missing Link in Innovation Execution

- What Is Innovation Management? A Practical Definition for Enterprise Teams

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management and pilot management — with AI-generated Trend Reports, AI Company Snapshots, automatic deduplication, and decision coaching built in.

Traction AI enables unlimited vendor discovery through conversational AI scouting — no boolean searches, no manual filtering, no analyst hours. With 50,000 curated Traction Matches plus full Crunchbase integration at no extra cost, zero setup fees, zero data migration charges, full API integrations, and deep configurability for each customer's unique workflows, Traction's innovation management platform gives enterprise innovation teams the intelligence and execution capability to turn innovation into measurable business outcomes. Recognized by Gartner. SOC 2 Type II certified.
