The Hidden Innovation Bottleneck: Idea Submission Without Context

Updated March 2026

Many innovation programs struggle long before evaluation, pilots, or decision gates.

They struggle at idea submission.

An employee responds to a clearly defined innovation challenge. The intent is good. The idea is submitted in good faith. But when the innovation team reviews it, they face a familiar problem: they have no visibility into whether this idea has been tried before, why similar initiatives stalled, or what constraints caused them to fail.

There is no accessible record. No institutional memory. No context at the moment it matters most.

The idea is evaluated in isolation — even if it represents the third or fourth attempt at the same concept in the past five years.

The Definition

The idea submission bottleneck is the point at which innovation programs lose quality before evaluation ever begins — when ideas enter the system without organizational context, historical precedent, or strategic framing, forcing review teams to rediscover lessons the organization has already paid to learn.

It is the most overlooked failure point in enterprise innovation. Most programs focus their rigor downstream — on evaluation frameworks, decision gates, and pilot governance. But the quality of everything downstream is determined by what happens at the point of submission. When the foundation is weak, no amount of downstream rigor can fully compensate for it.

Why Idea Submission Is the Most Overlooked Failure Point

Most organizations concentrate their innovation investment on the stages that are visible — the evaluation meetings, the pilot programs, the gate reviews that leadership attends. Submission looks simple by comparison. An employee fills out a form. The idea enters the system. Someone reviews it later.

But what happens at submission shapes everything that follows.

When ideas enter the system without historical or organizational context, review teams are forced to rediscover old lessons. Employees repeat ideas that failed for structural reasons they were never told about. Decisions feel arbitrary to the people who submitted them. Innovation teams become gatekeepers rather than guides.

Over time this erodes trust in the innovation process — even when intentions are good on both sides. Employees stop submitting because they never hear back meaningfully. Innovation teams become overwhelmed by volume they cannot process with consistency. The program accumulates a backlog that grows faster than anyone can address it.

The problem did not start at evaluation. It started at submission.

The Institutional Memory Gap

In most organizations, past innovation efforts live in slide decks, email threads, disconnected project management tools, and individual memory. When people change roles or priorities shift, those lessons are effectively lost.

This creates an institutional memory gap where similar ideas are re-evaluated repeatedly, known dead ends resurface under new framing, and teams spend time relearning what the organization already paid to discover.

The gap compounds as portfolios grow. A program that has been running for three years has accumulated significant organizational knowledge about what has been tried, what worked, what failed, and why. If that knowledge is not accessible at the point of submission — if the employee submitting an idea has no way to know that three similar initiatives were evaluated in the past two years — the organization is not learning from its own history. It is repeating it.

This is one of the earliest contributors to inconsistency in innovation evaluation. When ideas enter the system without shared context, similar initiatives get evaluated differently — not because the evaluation framework is broken but because the information available to reviewers varies dramatically from one submission to the next.

Why Good Ideas Still Stall at Submission

This is not an employee problem. Most employees submit ideas with positive intent but limited visibility into prior attempts, historical constraints, the reasons similar ideas failed, and what success would realistically require in the organization's context.

Without that context, even thoughtful ideas are often misaligned with current strategy, framed around the wrong use case, proposed at an unrealistic level of readiness, or duplicative of work already in progress elsewhere in the organization.

When those ideas are rejected later, employees typically receive limited feedback and little explanation. The system unintentionally trains people to submit less informed ideas over time — because informed submission requires access to organizational context that most programs do not provide.

The employee who submits a fourth variant of an idea that has been rejected three times is not being careless. They are operating in an information vacuum that the organization has not resolved.

The Highest-Leverage Moment in the Innovation Lifecycle

The moment an idea is submitted is the highest-leverage moment in the entire innovation lifecycle. It is the point where an employee is engaged, a problem is clearly defined, and prior learning can still be applied, before time and resources are spent evaluating an idea that earlier context would have reshaped.

Yet most innovation systems treat submission as a static form — capturing information without providing guidance. Context, if it appears at all, is added later by reviewers who are already operating under volume pressure.

By then the idea is already framed. The employee is already waiting. Reviewers are reacting rather than coaching. And downstream processes are forced to compensate for the absence of context at intake — which means evaluation is slower, less consistent, and more dependent on individual judgment than it needs to be.

This is where inconsistency begins. Not at the gate. At the form.

👉 Try Traction AI free — technology scouting and trend reports, no demo call required

How AI Changes Idea Submission Fundamentally

This is where AI creates a meaningful and immediate shift in program quality.

Instead of treating idea submission as a static intake step, AI allows it to become interactive and context-aware. With AI applied at the point of submission, the organization can surface relevant prior initiatives automatically — showing the employee what has been tried before in this area and what was learned. It can flag duplicates before they enter the evaluation queue rather than after a reviewer has spent time on them. It can prompt the submitter with questions that improve the quality and specificity of the idea before it is submitted. And it can validate the idea against external market signals — surfacing whether the technology or approach is gaining traction externally, losing traction, or already well-established.

The result is a fundamentally different intake experience. Instead of a form that captures an idea as stated, AI-powered intake coaches the idea toward the version most likely to be evaluated effectively — by connecting it to organizational memory, external market context, and the strategic framing that review teams need to make fast, consistent decisions.

This is not about filtering people out. It is about helping people submit smarter ideas — and giving review teams the context they need to evaluate those ideas consistently.
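As a rough illustration of duplicate flagging at intake, here is a minimal sketch in Python. It uses a plain bag-of-words cosine similarity as a stand-in for whatever AI model a real program would use. The function names, the 0.5 threshold, and the sample ideas are all assumptions made for illustration; none of them describe how any particular platform works.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors (0.0 to 1.0)."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_similar(new_idea: str, prior_ideas: dict[str, str],
                 threshold: float = 0.5) -> list[tuple[str, float]]:
    """Return (title, score) pairs at or above the threshold, best match first."""
    new_vec = tokenize(new_idea)
    scored = [(title, cosine_similarity(new_vec, tokenize(text)))
              for title, text in prior_ideas.items()]
    matches = [(title, score) for title, score in scored if score >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)


# Hypothetical prior submissions, invented for illustration only.
prior = {
    "Warehouse drone inventory (2023)": "use drones to count warehouse inventory automatically",
    "Chatbot for HR questions (2024)": "chatbot that answers employee HR policy questions",
}

matches = find_similar("automatically count inventory in the warehouse with drones", prior)
for title, score in matches:
    print(f"Possible duplicate: {title} (similarity {score:.2f})")
```

Word overlap misses ideas that are phrased differently but mean the same thing, so a production system would more plausibly compare semantic embeddings. The intake flow stays the same either way: score the new idea against prior records, then show the submitter the closest matches before the idea reaches a reviewer.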

What Fixes the Bottleneck Structurally

The fix is not a better form. It is not more detailed submission guidelines. It is not a longer review queue.

The structural fix is connecting idea submission to institutional memory — making the organizational knowledge accumulated from prior evaluations, pilots, and decisions accessible at the moment a new idea is submitted.

This requires three things:

Structured capture throughout the lifecycle. Institutional memory is only accessible at submission if it was captured in a structured, retrievable format when prior initiatives ran. Organizations that capture evaluation rationale, pilot outcomes, and decision context as structured data — rather than in slide decks and email threads — are the ones that can surface it at intake.

AI-powered similarity detection. The volume of ideas in a mature innovation program makes manual comparison impractical. AI can identify similar prior initiatives, surface relevant findings, and flag duplicates automatically — without requiring a reviewer to have personally worked on the prior evaluation.

Feedback loops that close. Employees who submit ideas need to understand what happened to them and why. When feedback is specific — "this idea is similar to an initiative we ran in 2024 that identified X as the key constraint" — it improves the quality of future submissions. When feedback is generic or absent, it does not.

Together these elements transform submission from a static intake step into the first stage of a learning cycle that makes the entire program smarter over time.
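To make structured capture and specific feedback concrete, the sketch below stores a prior initiative as a small record and builds a feedback message from it, in the spirit of the example quoted above. The field names, the `InitiativeRecord` type, and the sample record are hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InitiativeRecord:
    """One prior initiative, captured as structured data instead of slides or email."""
    title: str
    year: int
    outcome: str         # e.g. "stalled after evaluation", "was piloted"
    key_constraint: str  # the main reason it stalled, or the main lesson learned


def specific_feedback(record: InitiativeRecord) -> str:
    """Build submitter-facing feedback that names the prior initiative and its constraint."""
    return (
        f"This idea is similar to '{record.title}', an initiative we ran in "
        f"{record.year} that {record.outcome}. The key constraint identified was: "
        f"{record.key_constraint}."
    )


# Hypothetical record, invented for illustration only.
record = InitiativeRecord(
    title="Predictive maintenance sensors",
    year=2024,
    outcome="stalled after evaluation",
    key_constraint="legacy equipment lacked the required data interfaces",
)
message = specific_feedback(record)
print(message)
```

Because the outcome and constraint are stored as fields rather than buried in a slide deck, the same record can both be surfaced to a submitter at intake and be used to explain a decision afterwards.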

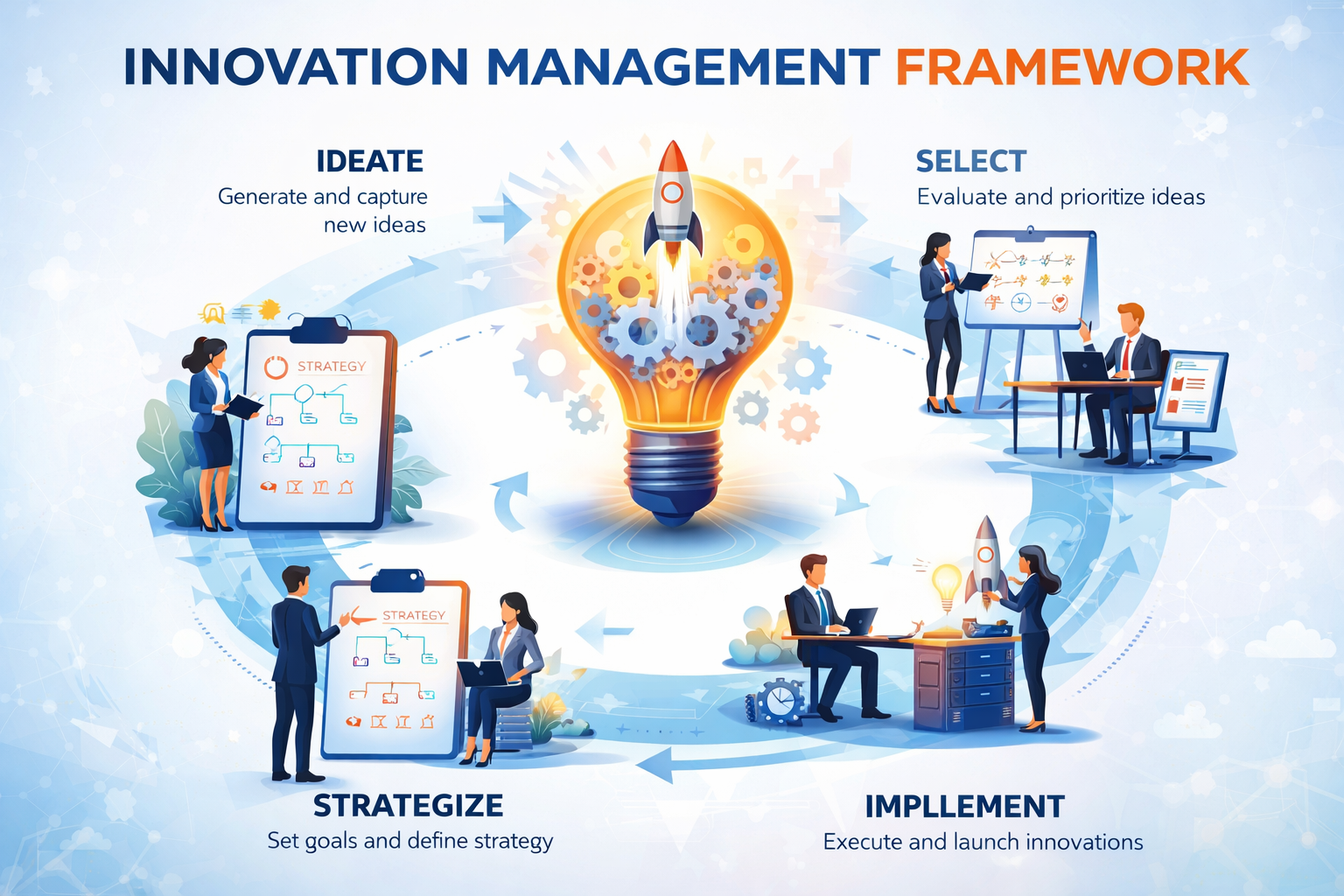

How This Connects to the Innovation Framework

The idea submission bottleneck is not an isolated problem. It feeds directly into every downstream challenge — inconsistent evaluation, arbitrary gate decisions, pilot purgatory — because those challenges are amplified when the ideas entering the system arrive without the context that would have shaped them differently.

In the Traction Innovation Framework, idea submission is treated as the first decision stage — not a passive intake step. AI-powered deduplication, prior evaluation surfacing, and market signal validation are applied at the point of submission, so ideas enter the evaluation stage already connected to the organizational and external context that review teams need.

Download the Traction Innovation Framework guide →

Frequently Asked Questions

What is the idea submission bottleneck in innovation?

The idea submission bottleneck is the point at which innovation programs lose quality before evaluation begins — when ideas enter the system without organizational context, historical precedent, or strategic framing. It forces review teams to rediscover lessons the organization has already learned, results in repeated evaluation of similar ideas, and creates the inconsistency that makes downstream evaluation and governance harder than it needs to be.

Why do good ideas stall at submission?

Good ideas stall at submission because employees have little visibility into prior attempts, historical constraints, or why similar ideas failed. Without that context, even well-intentioned ideas are often misaligned with strategy, framed incorrectly, or duplicative of work already in progress. The employee is not being careless; they are operating in an information vacuum the organization has not resolved.

How does institutional memory improve idea submission?

Institutional memory improves idea submission by surfacing relevant prior initiatives, evaluation findings, and decision rationale at the moment a new idea is submitted — before review resources are committed. When employees can see what has been tried before and what was learned, they submit more informed ideas. When reviewers have access to that history, they evaluate more consistently and make faster decisions.

How does AI improve innovation idea intake?

AI improves idea intake by making it interactive and context-aware rather than static. At the point of submission, AI can surface similar prior initiatives, flag duplicates, prompt the submitter with clarifying questions, and validate the idea against external market signals. The result is an intake process that coaches ideas toward the version most likely to be evaluated effectively — rather than capturing them as stated and leaving context to be added later by reviewers.

What is the connection between idea submission and evaluation inconsistency?

Inconsistency in evaluation often begins at submission. When ideas enter the system without shared context, reviewers are working with different information about similar initiatives — which produces different assessments. The apparent inconsistency in evaluation is frequently a symptom of inconsistent context at intake. Fixing the submission stage reduces the evaluation inconsistency downstream.

How does the hidden bottleneck connect to pilot purgatory?

The submission bottleneck feeds pilot purgatory indirectly. When ideas enter the system without adequate context, they are more likely to be misframed, misaligned, or duplicative — which means more of them stall at evaluation or reach pilots without adequate preparation. Pilot purgatory is often the endpoint of a chain that begins at submission with inadequate context and accumulates structural problems at every stage along the way.

Related Reading

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- Decision Gates vs. Innovation Theater: How High-Performing Teams Turn Pilots Into Decisions

- How to Design Innovation Decision Gates That Actually Work

- Why Judgment Alone Doesn't Scale: The Case for Consistent Innovation Evaluation

- What Is an Idea Management Platform? What Enterprise Teams Should Actually Look For

- What Is Innovation Management? A Practical Definition for Enterprise Teams

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management and pilot management — with AI-generated Trend Reports, AI Company Snapshots, automatic deduplication, and decision coaching built in.

Traction AI enables unlimited vendor discovery through conversational AI scouting — no boolean searches, no manual filtering, no analyst hours. With 50,000 curated Traction Matches plus full Crunchbase integration at no extra cost, zero setup fees, zero data migration charges, full API integrations, and deep configurability for each customer's unique workflows, Traction's innovation management platform gives enterprise innovation teams the intelligence and execution capability to turn innovation into measurable business outcomes. Recognized by Gartner. SOC 2 Type II certified.

Try Traction AI Free · Schedule a Demo · Start a Free Trial · tractiontechnology.com
