Why Judgment Alone Doesn't Scale: The Case for Consistent Innovation Evaluation

Updated March 2026

Experienced innovation leaders trust judgment for a reason.

Judgment reflects pattern recognition built over time. It accounts for nuance, context, and organizational reality. In the early stages of an innovation program, it often works remarkably well.

But as innovation activity scales, reliance on judgment alone begins to introduce risk.

Not technical risk — organizational risk.

Decisions become harder to explain, harder to defend, and harder to repeat. What once felt like disciplined leadership starts to look inconsistent. And confidence in the innovation function quietly erodes.

The Definition

Consistent innovation evaluation is the practice of assessing ideas, technologies, and vendors against a stable set of defined criteria — applied the same way across all initiatives in a category — so that decisions are based on evidence rather than the preferences, timing, or political influence of the individuals involved in the review.

It is not the same as rigid scoring or mechanical decision-making. It is the shared baseline that makes judgment more valuable, not less — by focusing experienced leaders on interpreting signals and weighing tradeoffs rather than debating what should matter in the first place.

When Judgment Stops Scaling

In small portfolios, judgment is reinforced by shared context. The same leaders review the same initiatives. Assumptions are understood implicitly. Tradeoffs are debated informally. Outcomes feel coherent.

That environment does not survive scale.

As innovation pipelines grow, so does complexity: more pilots running in parallel, more business units involved, more stakeholders influencing outcomes, more pressure to move quickly.

Judgment doesn't disappear — it fragments.

Different reviewers emphasize different risks. Some focus on technical feasibility, others on governance or commercial viability. Similar initiatives receive different outcomes depending on timing, sponsorship, or who happens to be in the room.

This is not a people problem. It is a systems problem. And it is the most common reason enterprise innovation programs lose executive credibility — not because they are producing bad outcomes, but because they cannot explain the outcomes they are producing.

Why Decision Gates Fail Without Consistent Evaluation

Decision gates exist to force commitment — to move initiatives forward, redirect them, or stop them deliberately. But decision gates alone are insufficient.

Without consistent evaluation criteria, gates become negotiation points rather than decision points. Evidence is selectively framed. Discussions drift toward opinion. Outcomes reflect influence rather than insight.

This is often the downstream effect of inadequate evaluation at earlier stages — when initiatives reach a gate without a shared understanding of what readiness actually means. At that point the gate hasn't failed. The evaluation model has.

The relationship between evaluation and governance is symbiotic. Consistent evaluation makes governance possible. Governance without consistent evaluation produces the appearance of rigor without the substance of it.

What Consistent Evaluation Actually Requires

Consistent evaluation does not mean every initiative must earn the same score or clear the same bar. It means every initiative is assessed against the same stable set of core dimensions, even when the outcomes differ.

High-performing innovation teams evaluate initiatives through questions like:

- Is the problem clearly defined and materially important to the business?

- Is the solution viable in the intended operating context?

- Is ownership clear beyond the experimentation stage?

- Are operational, security, and governance risks visible and understood?

- Is there a plausible path to value at scale?

Not every initiative needs to excel across every dimension. Early-stage efforts may score highly on strategic relevance but poorly on operational readiness. That is acceptable — as long as expectations are explicit and the evaluation captures what is known and what remains uncertain.

Consistency does not eliminate nuance. It creates a shared baseline for judgment — which is what allows judgment to be applied reliably rather than idiosyncratically.
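One way to make that shared baseline concrete is to record every evaluation against the same fixed set of dimensions, with unknowns stored explicitly rather than left out. The sketch below is illustrative only. The dimension names paraphrase the five questions above, and the 1-to-5 scale, the `Evaluation` class, and the stage labels are assumptions made for the example, not part of any particular tool.

```python
from dataclasses import dataclass, field

# Hypothetical dimension names, paraphrased from the five questions above.
DIMENSIONS = (
    "problem_importance",
    "solution_viability",
    "ownership_clarity",
    "risk_visibility",
    "path_to_value",
)


@dataclass
class Evaluation:
    initiative: str
    stage: str  # illustrative labels such as "early" or "pilot"
    # Score per dimension on an assumed 1-5 scale; None means "not yet known".
    scores: dict = field(default_factory=dict)

    def __post_init__(self):
        unrecognized = set(self.scores) - set(DIMENSIONS)
        if unrecognized:
            # Reviewers cannot introduce ad hoc criteria for a single initiative.
            raise ValueError(f"unrecognized dimensions: {sorted(unrecognized)}")
        # Every evaluation carries every dimension, even when the answer is unknown.
        self.scores = {name: self.scores.get(name) for name in DIMENSIONS}

    def open_questions(self):
        """Return the dimensions that have not been assessed yet."""
        return [name for name in DIMENSIONS if self.scores[name] is None]


early = Evaluation("Example initiative", "early",
                   {"problem_importance": 5, "risk_visibility": 2})
print(early.open_questions())
```

An early-stage effort can score high on one dimension and low or unknown on others. The structure only guarantees that the same questions were asked each time.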

Why Inconsistency Undermines Credibility

From a leadership perspective, inconsistent evaluation creates a credibility gap that is difficult to recover from.

When executives ask why one initiative advanced and another stalled, the answer should not depend on who reviewed it or how it was framed. If outcomes cannot be explained clearly and defensibly, confidence in the innovation function weakens — quietly at first, then visibly.

Over time, innovation begins to appear subjective. Or worse, political. This is how organizations end up with too many pilots, too few scale decisions, and increasing skepticism from leadership about whether the innovation program is producing anything worth the investment.

At that point innovation is no longer seen as a disciplined capability. It becomes a discretionary activity — the first thing cut when budgets tighten.

Consistent evaluation is the structural defense against this outcome. It is not about bureaucracy. It is about demonstrating that decisions are made on evidence, not on relationships.

Consistency Focuses Judgment — It Doesn't Replace It

A common concern is that consistent evaluation will constrain creativity or slow momentum. In practice the opposite is true.

When evaluation criteria are clear, judgment becomes more valuable — not less. Leaders can focus their experience on interpreting signals, weighing tradeoffs, and making decisions rather than debating what should matter in the first place. The framework handles the baseline. Human judgment handles the complexity.

This is especially important once organizations recognize that readiness is not binary. Different initiatives are ready for different decisions at different times. An early-stage technology that scores poorly on operational readiness is not a rejection candidate — it is a monitoring candidate. A pilot-stage vendor that scores well on technical capability but poorly on security posture is not a scale candidate — it is a remediation candidate.

Consistent evaluation provides the structure that allows these distinctions to be made deliberately rather than reactively.
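The distinction between rejecting, monitoring, and remediating can be written down as an explicit rule. The following is a minimal sketch under stated assumptions: the `triage` function, the stage labels, the score threshold of 3 on a 1-to-5 scale, and the dimension names are all hypothetical, chosen only to mirror the two examples above.

```python
def triage(stage, scores, threshold=3):
    """Suggest a next step from a stage and dimension scores.

    The rule is illustrative: weak dimensions never trigger rejection on
    their own. They route the initiative to monitoring or remediation,
    depending on how far along it is.
    """
    weak = sorted(name for name, score in scores.items() if score < threshold)
    if not weak:
        return "advance", weak
    if stage == "early":
        return "monitor", weak
    return "remediate", weak


# Early-stage technology with low operational readiness.
print(triage("early", {"strategic_relevance": 4, "operational_readiness": 2}))

# Pilot-stage vendor with strong technical capability but weak security posture.
print(triage("pilot", {"technical_capability": 5, "security_posture": 2}))
```

The output is a suggestion plus the dimensions that drove it. The decision itself still rests with the reviewers, who can see exactly which gaps the suggestion is based on.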

The Portfolio-Level Advantage Most Teams Overlook

The greatest benefit of consistent evaluation emerges not at the individual initiative level but at the portfolio level.

When initiatives are evaluated using the same dimensions over time, patterns become visible that are invisible when every evaluation is bespoke:

- Which technology categories consistently produce readiness gaps at the pilot stage

- Which evaluation dimensions most reliably predict scale success

- Where the same governance or security issues are blocking multiple initiatives simultaneously

- Which business units have the highest pilot-to-scale conversion rates — and why

These patterns are the raw material of organizational learning. They are what allows innovation teams to move beyond reacting to individual pilots and begin improving the system that produces pilots.
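Once evaluations share a structure, patterns like these reduce to simple aggregation. The sketch below assumes each evaluation is stored as a record with a business unit, a flag for whether the pilot reached scale, and a list of blocking issues. The field names and the sample records are placeholders invented for illustration, not real data.

```python
from collections import Counter

# Placeholder records for illustration only.
records = [
    {"unit": "Unit A", "reached_scale": True, "blockers": []},
    {"unit": "Unit A", "reached_scale": False, "blockers": ["security_review"]},
    {"unit": "Unit B", "reached_scale": False, "blockers": ["security_review", "data_governance"]},
    {"unit": "Unit B", "reached_scale": False, "blockers": ["data_governance"]},
]


def conversion_by_unit(records):
    """Pilot-to-scale conversion rate for each business unit."""
    totals, scaled = Counter(), Counter()
    for record in records:
        totals[record["unit"]] += 1
        scaled[record["unit"]] += int(record["reached_scale"])
    return {unit: scaled[unit] / totals[unit] for unit in totals}


def recurring_blockers(records, min_count=2):
    """Issues that block at least `min_count` initiatives."""
    counts = Counter(b for record in records for b in set(record["blockers"]))
    return {blocker: n for blocker, n in counts.items() if n >= min_count}


print(conversion_by_unit(records))
print(recurring_blockers(records))
```

Neither function is possible when every evaluation uses its own criteria, because there is nothing common to count.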

Without consistent evaluation, every initiative is a one-off. With it, every initiative contributes to a body of institutional intelligence that makes the next evaluation faster, the next gate decision better, and the next pilot more likely to reach a conclusion.

This is how innovation programs compound — not through more activity, but through better learning from the activity they are already running.

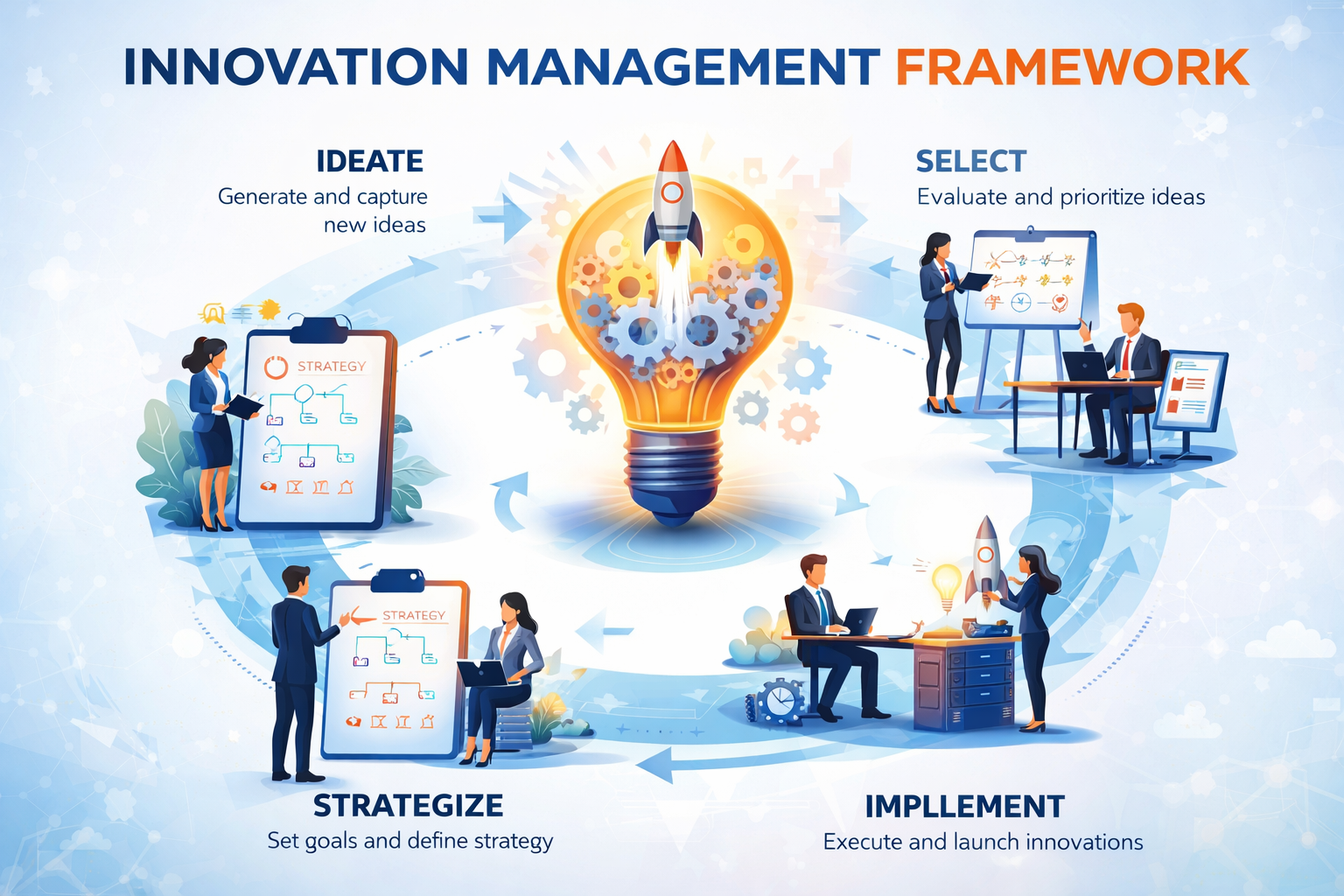

How Consistent Evaluation Connects to an Innovation Framework

Consistent evaluation does not stand alone. It is most powerful when it operates inside a connected innovation management framework where evaluation criteria are defined at the program level, applied consistently across all assessments in a category, and connected to the decision gates that determine what happens next.

In the Traction Innovation Framework, evaluation criteria are configured once and applied across every vendor, idea, and pilot assessed within a program. Assessments are structured, comparable, and captured as institutional memory — so the portfolio-level patterns that drive organizational learning are visible in real time rather than buried in spreadsheets and email archives.

Frequently Asked Questions

What is consistent innovation evaluation?

Consistent innovation evaluation is the practice of assessing ideas, technologies, and vendors against a stable set of defined criteria applied the same way across all initiatives in a category. It ensures that decisions are based on evidence rather than the preferences, timing, or influence of the individuals involved in the review — making outcomes explainable, defensible, and comparable across the portfolio.

Why does inconsistent evaluation hurt innovation programs?

Inconsistent evaluation creates a credibility gap with leadership. When similar initiatives receive different outcomes based on who reviewed them or when the review happened, the innovation function appears subjective or political rather than disciplined. Over time this erodes executive confidence, reduces investment, and turns innovation from a strategic capability into a discretionary activity.

Does consistent evaluation slow innovation down?

No. It typically speeds things up. When evaluation criteria are clear and stable, teams prepare the right evidence rather than the best presentation. Gate reviews focus on decisions rather than debate. Initiatives that do not meet the criteria are stopped earlier and with less friction. The overhead of subjective negotiation is replaced by the efficiency of shared standards.

What dimensions should innovation evaluation cover?

The most important dimensions are strategic relevance (is the problem material to the business?), solution viability in the operating context, ownership clarity beyond experimentation, visibility of operational, security, and governance risks, and a plausible path to value at scale. Not every initiative needs to excel across all dimensions. What matters is that the same framework is applied consistently so outcomes are comparable.

How does consistent evaluation connect to decision gates?

Consistent evaluation provides the inputs that make decision gates functional. Without consistent evaluation criteria, gates become negotiation forums where evidence is selectively framed and outcomes depend on influence. With them, gates have the structured evidence they need to produce genuine decisions (advance, redirect, or stop) rather than deferring to whoever makes the strongest case in the room.

How does AI support consistent innovation evaluation?

AI supports consistent evaluation by generating structured vendor profiles, trend reports, and assessment summaries that reduce the research overhead of each evaluation without introducing evaluator bias. AI also surfaces prior evaluations of similar technologies at the point a new assessment begins — so teams are not starting from zero and the organization's accumulated evaluation history actively informs current decisions.

How does this connect to the Traction Innovation Framework?

The Traction Innovation Framework defines evaluation criteria at the program level and applies them consistently across every vendor, idea, and pilot assessed within that program. Assessments are structured, comparable, and captured as institutional memory — building the portfolio-level intelligence that allows the organization to learn from every evaluation cycle rather than treating each one as a one-off.

Related Reading

- What Is an Innovation Management Framework? A Practical Guide for Enterprise Teams

- How to Design Innovation Decision Gates That Actually Work

- From Pilots to Performance: Why Innovation Needs an Operating Model

- Decision Gates vs. Innovation Theater: How High-Performing Teams Turn Pilots Into Decisions

- Why Pilot Management Software Is the Missing Link in Innovation Execution

- What Is Innovation Management? A Practical Definition for Enterprise Teams

About Traction Technology

Traction Technology is an AI-powered innovation management software platform trusted by Fortune 500 enterprise innovation teams. Built on Claude (Anthropic) and AWS Bedrock with a RAG architecture, Traction manages the full innovation lifecycle — from technology scouting and open innovation through idea management and pilot management — with AI-generated Trend Reports, AI Company Snapshots, automatic deduplication, and decision coaching built in.

Traction AI enables unlimited vendor discovery through conversational AI scouting — no boolean searches, no manual filtering, no analyst hours. With 50,000 curated Traction Matches plus full Crunchbase integration at no extra cost, zero setup fees, zero data migration charges, full API integrations, and deep configurability for each customer's unique workflows, Traction's innovation management platform gives enterprise innovation teams the intelligence and execution capability to turn innovation into measurable business outcomes. Recognized by Gartner. SOC 2 Type II certified.
